Model-Internal Intelligence

Look inside AI. Unlock hidden signals.

NeuronLens discovers internal concepts and turns them into deployable lenses for safety, agents, model repair, and domain intelligence.

Not just what models say, but what forms inside them.

The surface shows symptoms. The inside holds answers.

Prompts, outputs, and traces capture what a model said. They rarely surface the internal signals that caused the behavior, or the latent knowledge still waiting to be discovered.

Outputs are shallow

Final answers hide the concepts that shaped them.

Failures stay one-off

Teams patch incidents instead of creating reusable controls.

Signals stay trapped

Useful model knowledge remains inside the model.

Surface signals show symptoms. Internal signals reveal mechanisms.

Discover. Control. Design.

Discover

Find internal features, concepts, and hidden signals

Every discovery becomes evidence you can act on, explain, and trust.

Control

Monitor and intervene during runtime

Internal signals become policy gates that act before a failure occurs.

Design

Repair and design models through internal evidence

Target the source behavior, not just the output symptom.

Control this run. Design future runs.

Products

Product Verticals

Runtime Lens

Deploy validated signals into live safety and agent workflows.

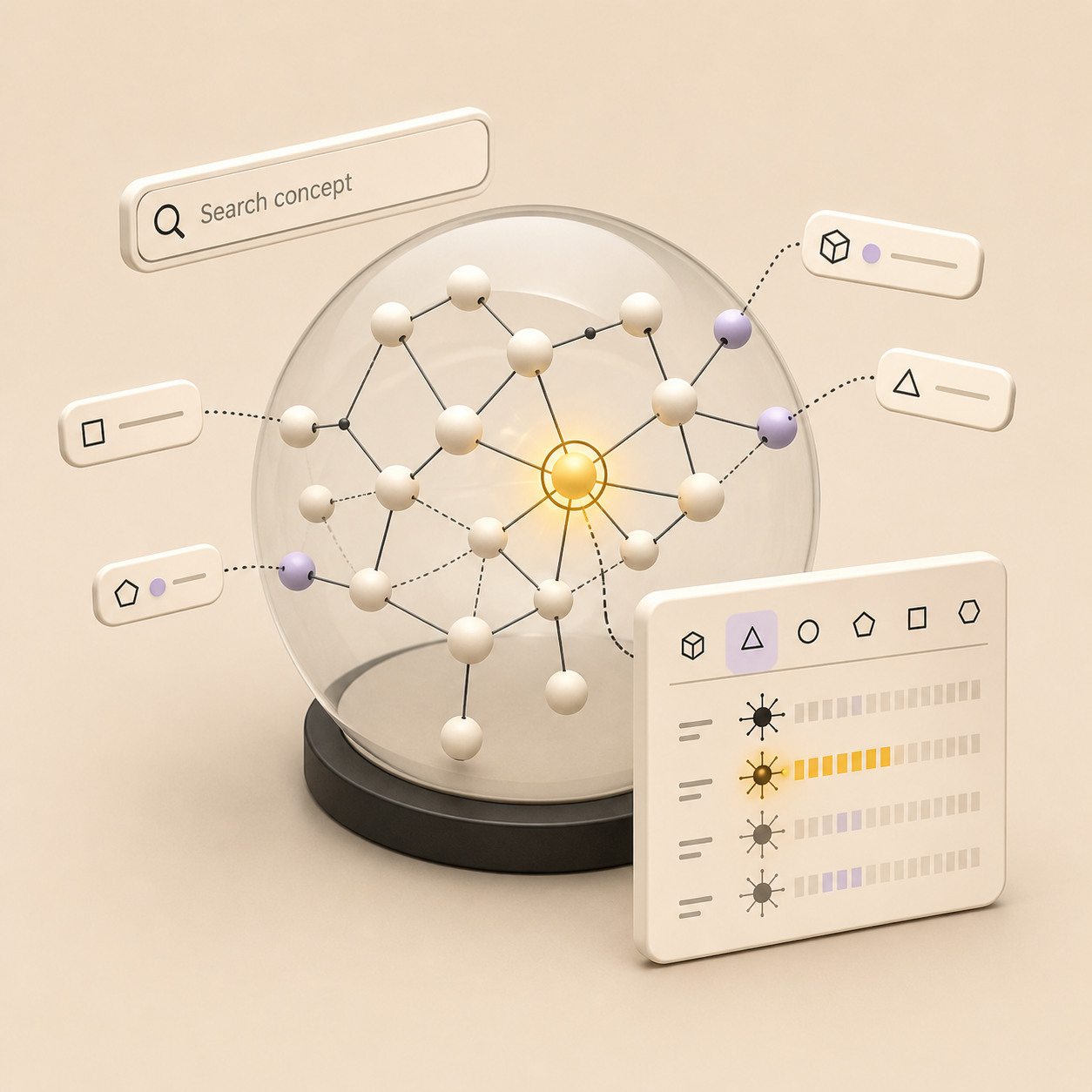

Concept Studio

Discover and inspect internal model concepts.

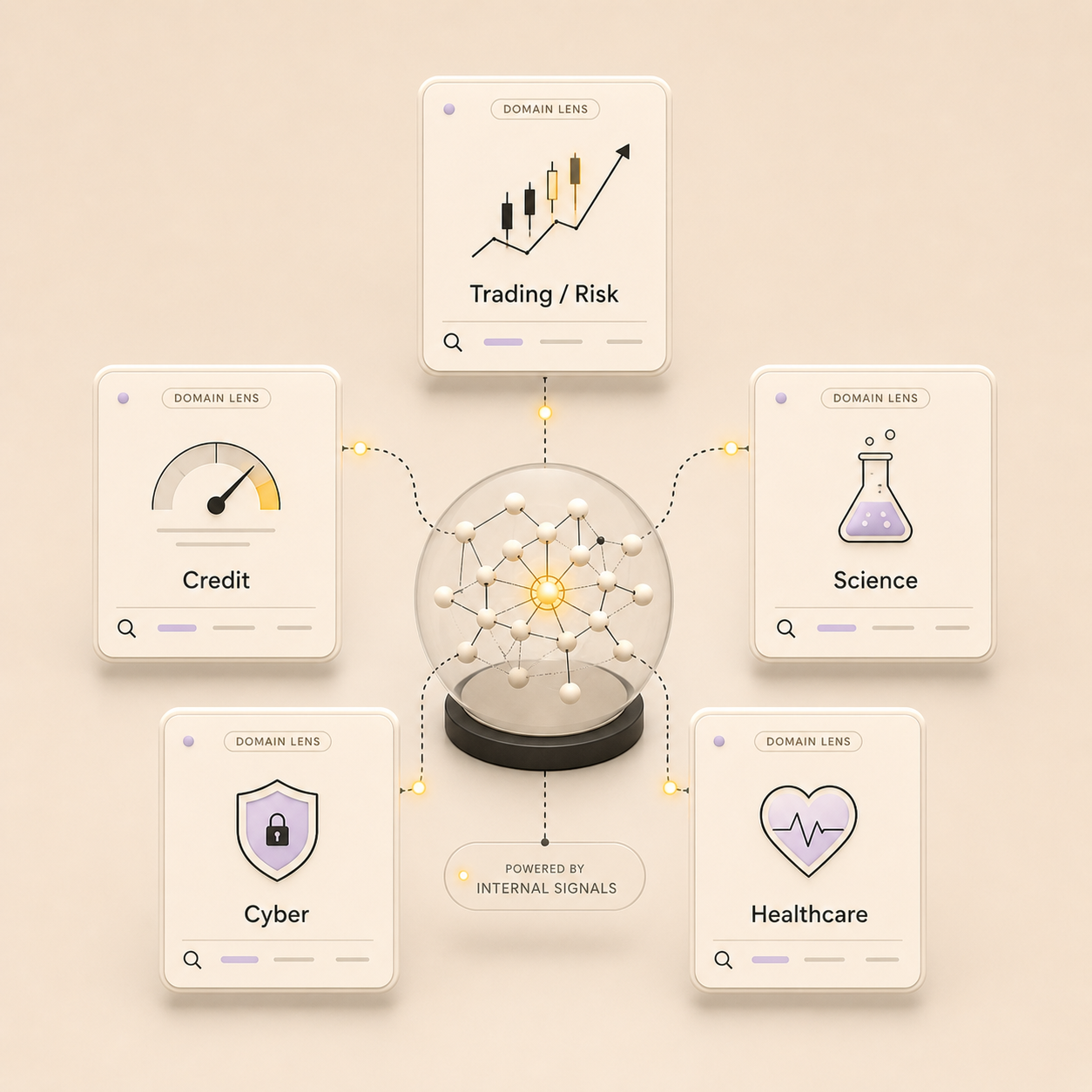

Domain Lenses

Package internal signals into specialized products for high-stakes domains.

Model Design Studio

Fix recurring model failures with targeted internal repair.

Powered by a research engine for activation analysis, sparse features, probes, and causal validation.

Our Research

Technical Explorations

Deep dives into the mechanics of neural representations.

Mapping the Concept Space Inside Language Models

How we used sparse features, auto-interpretability, and steering experiments to turn hidden activations into searchable, testable, and steerable signals.

Training Sparse Autoencoders That Produce Useful Features: A Practical Guide

A practical guide to training sparse autoencoders across model families, covering frameworks, failure modes, evaluation metrics, and case studies.

Labeling Domain-Specific Features of Sparse Autoencoders: Practical Strategies

From feature discovery to label fidelity in large language models (Nemotron + Llama case study)

From the Team

Essays, opinions, and updates on interpretability, AI safety, and what we're building.

Interpretability as Runtime Governance

From "Understanding Neurons" to Measurable Control Systems

Why Interpretability Stalled - and How It Gets Unstuck

The real bottlenecks that slowed interpretability progress, and what's quietly starting to change.

The Illusion of Chain-of-Thought Transparency

Why reading reasoning text tells you very little about what models actually think.

See what's driving your model.

Get early access to NeuronLens. We work with a small number of research and enterprise teams. Reach out to start a conversation.